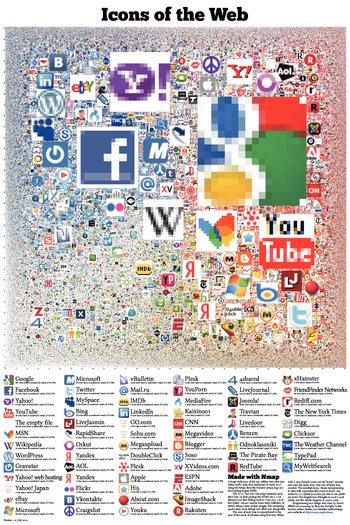

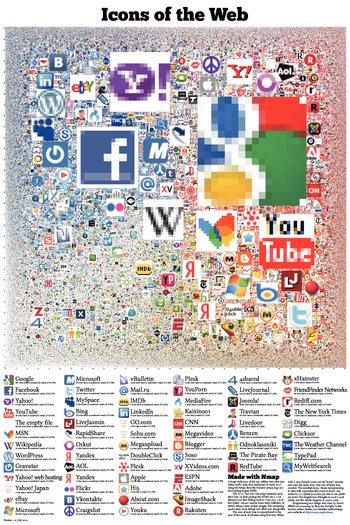

The graphic has been made into a 24x36 inch poster. Click to see a larger version.

The graphic has been made into a 24x36 inch poster. Click to see a larger version.

%s: Not found.

" % cgi.escape(prefix) else: x_f = float(loc.x + X_OFFSET) / RESOLUTION y_f = float(Y_OFFSET - loc.y) / RESOLUTION print "%s: The icon is at (%s, %s) and is %d × %d pixels.

" % (cgi.escape(prefix), fmt_float(x_f), fmt_float(y_f), loc.size, loc.size) def lookup(base, hash): result = None filename = os.path.join(DATADIR, base, LOCATIONS_DIR, hash[0:2], hash[2:4]) try: f = open(filename, "r") except IOError, e: if e.errno == errno.ENOENT: return None else: raise else: for line in f: h, l, t, s = line.split() if h == hash: l = int(l) t = int(t) s = int(s) result = loc(l + s / 2, t + s / 2, s * 2) break f.close() return result def lookup_domain_single(domain, filename): f = open(filename, "r") try: for line in f: host, hash = line.strip().split("\t") if host == domain: return hash finally: f.close() def lookup_domain_aux(domain, dir, remaining): if len(remaining) == 0: return lookup_domain_single(domain, os.path.join(dir, "term")) else: k, remaining = remaining[0], remaining[1:] filename = os.path.join(dir, k) if os.path.isdir(filename): return lookup_domain_aux(domain, filename, remaining) else: return lookup_domain_single(domain, filename) def collapse_domain(domain): r = [] for c in domain.lower(): if c.isalnum(): r.append(c) return "".join(r) def lookup_domain(base, domain): remaining = collapse_domain(domain) return lookup_domain_aux(domain, os.path.join(DATADIR, base, DOMAINS_DIR), remaining) def handle_md5(hash): alexa_loc = lookup("alexa", hash) print_loc("MD5 lookup", alexa_loc) return alexa_loc def handle_coords(coords): x, y = coords x = int(x * RESOLUTION + 0.5) - X_OFFSET y = Y_OFFSET - int(y * RESOLUTION + 0.5) return loc(x, y, 16) def get_url(url): t = timer() print "" print "%s" % cgi.escape(url) sys.stdout.flush() t.start() try: root = urllib2.urlopen(url, None) except Exception, e: print " failed: %s." % cgi.escape(str(e)) print "

" return None, None if root.geturl() != url: print " → %s" % cgi.escape(root.geturl()) sys.stdout.flush() data = root.read(10000) t.end() print " %d bytes in %.2f seconds.%s only http and https URLs are supported.

" % cgi.escape(url) return url = urlparse.urlunparse(parts) alexa_loc = None hash, url = get_url(url) if hash: alexa_loc = lookup("alexa", hash) print "Why not found? See the FAQ for more information.

""" return alexa_loc def handle_query(q): coords = split_coords(q) if is_md5(q.upper()): return handle_md5(q.upper()) elif coords: return handle_coords(coords) else: return handle_url(q) def box(n, low, high): if n < low: return low elif n > high: return high else: return n def zoom_for_size(s): """Return a good zoom level for an icon of the given size.""" return box(int(math.log(float(s) / AUTOZOOM_SIZE, 2) + 0.5), 0, 6) form = cgi.FieldStorage() q = form.getfirst("q") print "Content-type: text/html\r" print "Connection: close\r" print "\r" print """\A large-scale scan of the top million web sites (per Alexa traffic data) was performed in early 2010 using the Nmap Security Scanner and its scripting engine. As seen in the New York Times, Slashdot, Gizmodo, Engadget, and Telegraph.co.uk ...

We retrieved each site's icon by first parsing the HTML for a link tag and then falling back to /favicon.ico if that failed. 328,427 unique icons were collected, of which 288,945 were proper images. The remaining 39,482 were error strings and other non-image files. Our original goal was just to improve our http-favicon.nse script, but we had enough fun browsing so many icons that we used them to create the visualization below.

The area of each icon is proportional to the sum of the reach of all sites using that icon. When both a bare domain name and its "www." counterpart used the same icon, only one of them was counted. The smallest icons--those corresponding to sites with approximately 0.0001% reach--are scaled to 16x16 pixels. The largest icon (Google) is 11,936 x 11,936 pixels, and the whole diagram is 37,440 x 37,440 (1.4 gigapixels). Since your web browser would choke on that, we have created the interactive viewer below (click and drag to pan, double-click to zoom, or type in a site name to go right to it).

""" sys.stdout.flush() alexa_loc = None if q: alexa_loc = handle_query(q) print """\ """ % (cgi.escape(q and q or "", True)) print """\ """ print """\ """ print """\

The graphic has been made into a 24x36 inch poster. Click to see a larger version.

The graphic has been made into a 24x36 inch poster. Click to see a larger version.

We have only printed 15 posters (for Nmap developers) so far, but we're considering an offset print run if there is enough demand. If you might be interested in buying a physical copy of the poster, please fill out this short form:

For downloads of programs and data files, go to this page.

Programming and design was done by David Fifield and scanning performed by Brandon Enright.